mobile-use-setup

AI agents can now use real Android and iOS apps, just like a human.

npx skills add https://github.com/minitap-ai/mobile-use --skill mobile-use-setup

Install this skill with the CLI and start using the SKILL.md workflow in your workspace.

mobile-use: automate your phone with natural language

Mobile-use is a powerful, open-source AI agent that controls your Android or iOS device using natural language. It understands your commands and interacts with the UI to perform tasks, from sending messages to navigating complex apps.

Mobile-use is evolving quickly. Your suggestions, ideas, and bug reports will shape this project. Don't hesitate to join the conversation on Discord or contribute directly; we reply to everyone! ❤️

✨ Features

- 🗣️ Natural Language Control: Interact with your phone using your native language.

- 📱 UI-Aware Automation: Intelligently navigates through app interfaces (note: currently has limited effectiveness with games as they don't provide accessibility tree data).

- 📊 Data Scraping: Extract information from any app and structure it into your desired format (e.g., JSON) using a natural language description.

- 🔧 Extensible & Customizable: Easily configure which LLMs drive the agents behind mobile-use.

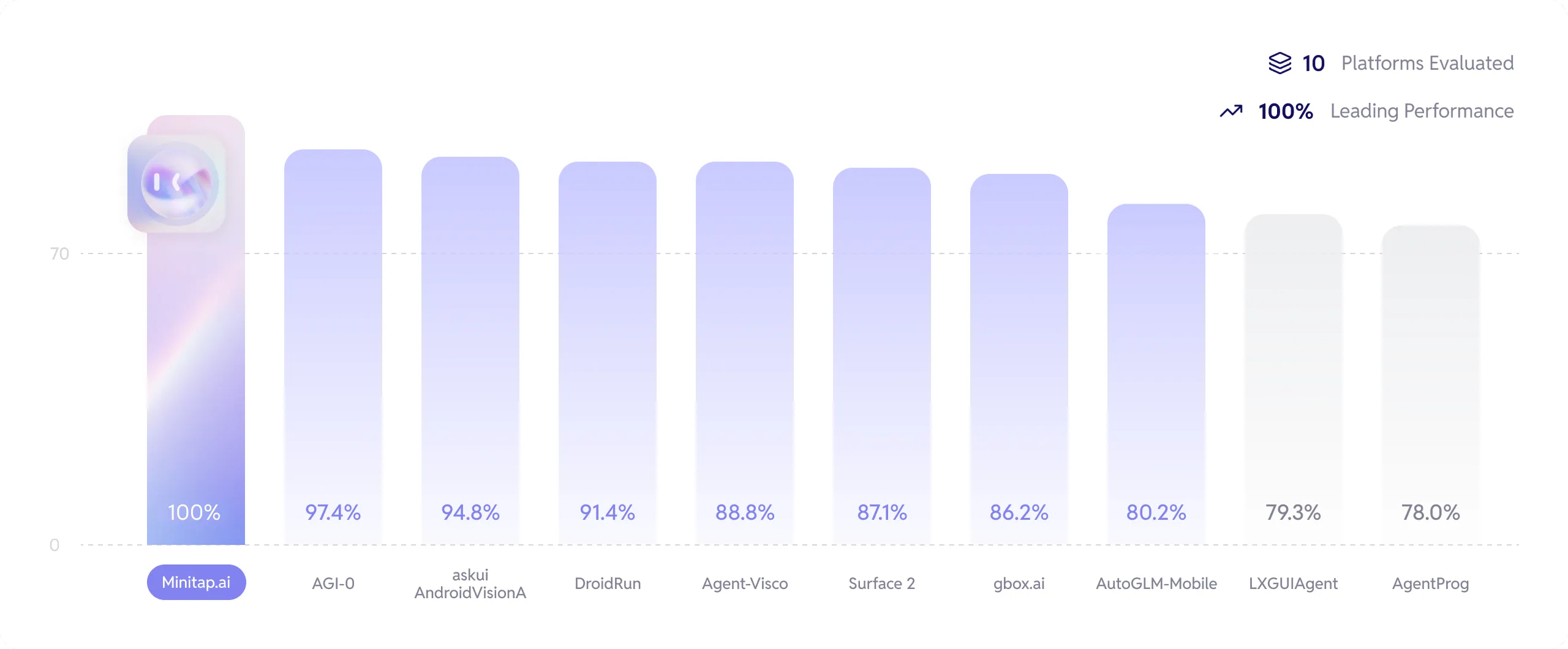

Benchmarks

We are the top performers on the AndroidWorld benchmark and the first to have completed 100% of it.

Get more info about how we reached this milestone here: Minitap Benchmark.

The official leaderboard is available here.

Check out our research paper here.

🚀 Getting Started

Ready to automate your mobile experience? Follow these steps to get mobile-use up and running.

🌐 From our Platform

The easiest way to get started is our Platform: follow the Platform quickstart.

🛠️ From source

- Set up environment variables:

Copy .env.example to .env and add your API keys.

cp .env.example .env

- (Optional) Customize the LLM configuration:

To use different models or providers, create your own LLM configuration file:

cp llm-config.override.template.jsonc llm-config.override.jsonc

Then edit llm-config.override.jsonc to fit your needs.

You can also use local LLMs or any other OpenAI-API-compatible provider:

- Set OPENAI_BASE_URL and OPENAI_API_KEY in your .env.

- In your llm-config.override.jsonc, set openai as the provider for the agent nodes you want, and choose a model supported by your provider.

[!NOTE]

If you want to use Google Vertex AI, you must either:

- Have credentials configured for your environment (gcloud, workload identity, etc.)

- Store the path to a service account JSON file in the GOOGLE_APPLICATION_CREDENTIALS environment variable

More information: Credential types; google.auth API reference.
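A quick way to catch configuration mistakes before the first run is to check .env against the rules above. The Python sketch below is a hypothetical helper, not part of mobile-use: it reads only simple KEY=VALUE lines from .env (not the full dotenv syntax, and not variables already exported in your shell), flags OPENAI_BASE_URL without OPENAI_API_KEY, and, when GOOGLE_APPLICATION_CREDENTIALS is set, checks that it points to a JSON file whose type field is service_account. The names parse_env and check_env are illustrative.

```python
import json
from pathlib import Path
from typing import Dict, List


def parse_env(text: str) -> Dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blanks and # comments.

    Assumption: plain dotenv lines only (no multi-line values, no `export`, no interpolation).
    """
    env: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Drop optional surrounding quotes, e.g. KEY="value".
        env[key.strip()] = value.strip().strip('"').strip("'")
    return env


def check_env(env: Dict[str, str]) -> List[str]:
    """Return human-readable problems; an empty list means nothing was flagged."""
    problems: List[str] = []
    # An OpenAI-compatible provider is configured with both variables.
    if env.get("OPENAI_BASE_URL") and not env.get("OPENAI_API_KEY"):
        problems.append("OPENAI_BASE_URL is set but OPENAI_API_KEY is missing")
    # Vertex AI via a service account: the variable must point to a key file.
    creds = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if creds:
        path = Path(creds)
        if not path.is_file():
            problems.append(f"GOOGLE_APPLICATION_CREDENTIALS file not found: {creds}")
        else:
            try:
                data = json.loads(path.read_text())
            except ValueError:
                problems.append(f"{creds} is not valid JSON")
            else:
                # Service account key files carry "type": "service_account".
                if not isinstance(data, dict) or data.get("type") != "service_account":
                    problems.append(f"{creds} does not look like a service account key")
    return problems


if __name__ == "__main__":
    env_file = Path(".env")
    env = parse_env(env_file.read_text()) if env_file.is_file() else {}
    for problem in check_env(env):
        print("warning:", problem)
```

The Vertex check only runs when the variable is set, since credentials configured through gcloud or workload identity don't need it.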

Quick Launch (Docker)

[!NOTE]

This quickstart is currently available only for Android devices and emulators, and it requires Docker.

First:

- Either plug in your Android device and enable USB debugging in Developer Options

- Or launch an Android emulator

Then run in your terminal:

- For Linux/macOS:

chmod +x mobile-use.sh

bash ./mobile-use.sh \

"Open Gmail, find first 3 unread emails, and list their sender and subject line" \

--output-description "A JSON list of objects, each with 'sender' and 'subject' keys"

- For Windows (inside a PowerShell terminal):

powershell.exe -ExecutionPolicy Bypass -File mobile-use.ps1 `

"Open Gmail, find first 3 unread emails, and list their sender and subject line" `

--output-description "A JSON list of objects, each with 'sender' and 'subject' keys"
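If you drive the Quick Launch from another tool, the two commands above differ only in the launcher. The sketch below is a hypothetical helper (build_launch_command is not part of mobile-use) that builds the matching argument list for the current OS using platform.system(). It assumes you run it from the repository root, where mobile-use.sh and mobile-use.ps1 live.

```python
import platform
from typing import List, Optional


def build_launch_command(goal: str, output_description: Optional[str] = None,
                         system: Optional[str] = None) -> List[str]:
    """Build the Quick Launch argv for this OS, mirroring the two commands shown above."""
    system = system or platform.system()
    if system == "Windows":
        cmd = ["powershell.exe", "-ExecutionPolicy", "Bypass", "-File", "mobile-use.ps1", goal]
    else:
        # Linux and macOS (platform.system() == "Darwin") use the bash script.
        cmd = ["bash", "./mobile-use.sh", goal]
    if output_description:
        cmd += ["--output-description", output_description]
    return cmd


if __name__ == "__main__":
    cmd = build_launch_command(
        "Open Gmail, find first 3 unread emails, and list their sender and subject line",
        "A JSON list of objects, each with 'sender' and 'subject' keys",
    )
    # Only prints the argv; pass it to subprocess.run(cmd, check=True) to actually launch.
    print(cmd)
```

Passing the list to subprocess.run avoids shell quoting issues: the natural-language goal stays a single argument even though it contains spaces and commas.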

[!NOTE]

If using your own device, make sure to accept the ADB-related connection requests that will pop up on your device.
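To see whether a device is still waiting for that prompt, you can look at the output of adb devices, where each line pairs a serial with a state such as device or unauthorized. The sketch below is a hypothetical parser for that text, not part of mobile-use; it skips the header line and the adb daemon messages that start with *.

```python
from typing import Dict, List


def parse_adb_devices(output: str) -> Dict[str, str]:
    """Map each serial in `adb devices` output to its state (device, unauthorized, offline, ...)."""
    devices: Dict[str, str] = {}
    for raw in output.splitlines():
        line = raw.strip()
        # Skip blanks, the "List of devices attached" header and daemon messages like "* daemon started".
        if not line or line.startswith("List of devices") or line.startswith("*"):
            continue
        # Split once so multi-word states (e.g. "no permissions ...") stay intact.
        parts = line.split(None, 1)
        if len(parts) == 2:
            devices[parts[0]] = parts[1]
    return devices


def pending_authorization(devices: Dict[str, str]) -> List[str]:
    """Serials that still need the USB debugging prompt accepted on the device."""
    return [serial for serial, state in devices.items() if state == "unauthorized"]
```

For example, feed it parse_adb_devices(subprocess.run(["adb", "devices"], capture_output=True, text=True).stdout); any serial returned by pending_authorization still needs you to accept the prompt on the phone.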

🧰 Troubleshooting

The script will try to connect to your device via IP.

Therefore, your device must be connected to the same Wi-Fi network as your computer.

1. No device IP found

If the script fails with the following message:

Could not get device IP. Is a device connected via USB and on the same Wi-Fi network?

then the script could not find any of the common Wi-Fi interface names on your device.

Find out which WLAN interface your phone uses by running adb shell ip addr show up.

Then pass that interface to the script with the --interface <YOUR_INTERFACE_NAME> option.
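The output of adb shell ip addr show up lists each interface as a numbered header line followed by indented inet lines. The sketch below is a hypothetical helper (not part of mobile-use) that extracts IPv4 addresses per interface and keeps those with a non-loopback address as candidates for --interface. It assumes the iproute2-style format; names such as rmnet_data0@if3 are trimmed at the @.

```python
import re
from typing import Dict, List, Optional

HEADER = re.compile(r"^\d+:\s+([^:\s]+):")                      # e.g. "30: wlan0: <BROADCAST,...>"
INET = re.compile(r"^\s+inet\s+(\d{1,3}(?:\.\d{1,3}){3})/\d+")  # IPv4 only; "inet6" does not match


def ipv4_by_interface(ip_addr_output: str) -> Dict[str, List[str]]:
    """Map interface name -> IPv4 addresses from `ip addr show up` output."""
    result: Dict[str, List[str]] = {}
    current: Optional[str] = None
    for line in ip_addr_output.splitlines():
        header = HEADER.match(line)
        if header:
            # Drop the peer suffix, e.g. "rmnet_data0@if3" -> "rmnet_data0".
            current = header.group(1).split("@", 1)[0]
            result.setdefault(current, [])
            continue
        inet = INET.match(line)
        if inet and current is not None:
            result[current].append(inet.group(1))
    return result


def candidate_interfaces(ip_addr_output: str) -> List[str]:
    """Interfaces with a non-loopback IPv4 address: likely values for --interface."""
    return [
        name
        for name, addrs in ipv4_by_interface(ip_addr_output).items()
        if any(not addr.startswith("127.") for addr in addrs)
    ]
```

On a phone connected to Wi-Fi, the candidate is typically the interface carrying your LAN address; if several appear, pick the one on the same subnet as your computer.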

2. Failed to connect to <DEVICE_IP>:5555 inside Docker

This is most likely caused by a firewall blocking the connection. The fix depends on your firewall configuration, so there is no single solution.
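Before digging into firewall rules, it can help to check whether the port is reachable at all. The sketch below is a hypothetical check (can_reach is not part of mobile-use) that attempts a plain TCP connection with socket.create_connection. Running it on the host and comparing with the failure inside Docker may help narrow down where the connection is blocked; note that a successful TCP connection alone does not prove adb will work.

```python
import socket


def can_reach(host: str, port: int = 5555, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused connections, timeouts and unreachable hosts all raise OSError subclasses.
        return False
```

Call it with your device's IP, for example can_reach("<DEVICE_IP>") after substituting the address from the error message.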

3. Failed to pull GHCR docker images (unauthorized)

Because the uv Docker images are hosted in public ghcr.io repositories, this error can come from an expired token left over from using ghcr.io with private repositories.

Try running docker logout ghcr.io and then run the script again.

Manual Launch (Development Mode)

For developers who want to set up the environment manually:

1. Device Support

Mobile-use currently supports the following devices:

- Physical Android Phones: Connect via USB with USB debugging enabled.

- Android Emulators: Set up through Android Studio.

- iOS Simulators: Supported for macOS users.

[!NOTE]

Physical iOS devices are not yet supported.

2. Prerequisites

For Android:

- Android Debug Bridge (ADB): A tool to connect to your device.

For iOS (macOS only):

- Xcode: Apple's IDE for iOS development.

- fb-idb: Facebook's iOS Development Bridge for device automation.

# Install via Homebrew (macOS)

brew tap facebook/fb

brew install idb-companion

[!NOTE]

idb_companion is required to communicate with iOS simulators. Make sure it's in your PATH after installation.

Common requirements:

Before you begin, ensure you have the following installed:

- uv: A lightning-fast Python package manager.
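The command-line prerequisites above can be checked with shutil.which, which returns None for executables it cannot find on PATH. The sketch below is a hypothetical checker, not part of mobile-use; the tool names (uv, adb, idb_companion) come from this section, and Xcode is left out of this sketch. The which parameter exists only so the function can be tested without the real tools.

```python
import platform
import shutil
from typing import Callable, Iterable, List, Optional

COMMON_TOOLS = ["uv"]
ANDROID_TOOLS = ["adb"]
IOS_TOOLS = ["idb_companion"]  # iOS simulators are supported on macOS only


def missing_tools(platforms: Iterable[str],
                  which: Callable[[str], Optional[str]] = shutil.which) -> List[str]:
    """Return the required executables that `which` cannot find on PATH."""
    wanted = set(platforms)
    required = list(COMMON_TOOLS)
    if "android" in wanted:
        required += ANDROID_TOOLS
    if "ios" in wanted:
        required += IOS_TOOLS
    return [tool for tool in required if which(tool) is None]


if __name__ == "__main__":
    targets = ["android"] + (["ios"] if platform.system() == "Darwin" else [])
    missing = missing_tools(targets)
    print("missing from PATH:", ", ".join(missing) if missing else "nothing")
```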

3. Installation

- Clone the repository:

git clone https://github.com/minitap-ai/mobile-use.git && cd mobile-use

- Create & activate the virtual environment:

# This will create a .venv directory using the Python version in .python-version

uv venv

# Activate the environment

# On macOS/Linux:

source .venv/bin/activate

# On Windows:

.venv\Scripts\activate

- Install dependencies:

# Sync with the locked dependencies for a consistent setup

uv sync
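To confirm the environment is active, Python can tell whether it is running inside a virtual environment: in a venv, sys.prefix differs from sys.base_prefix. The sketch below is a hypothetical check, not part of mobile-use, that also compares the interpreter with .python-version, assuming that file holds a plain dotted version such as 3.12.

```python
import sys
from pathlib import Path
from typing import Sequence


def in_virtualenv() -> bool:
    """True when running inside a virtual environment such as the .venv created by `uv venv`."""
    # Inside a venv, sys.prefix points at the venv while sys.base_prefix points at the base install.
    return sys.prefix != sys.base_prefix


def matches_python_version(pin: str, version: Sequence = tuple(sys.version_info)) -> bool:
    """Compare the interpreter with a numeric pin such as "3.12" or "3.12.4"."""
    # Assumption: the pin is plain dotted numbers; other formats raise ValueError.
    wanted = tuple(int(part) for part in pin.strip().split("."))
    return tuple(version[: len(wanted)]) == wanted


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
    pin_file = Path(".python-version")
    if pin_file.is_file():
        try:
            print("matches .python-version:", matches_python_version(pin_file.read_text()))
        except ValueError:
            print("unrecognized .python-version format")
```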

👨‍💻 Usage

To run mobile-use, simply pass your command as an argument.

Example 1: Basic Command

python ./src/mobile_use/main.py "Go to settings and tell me my current battery level"

Example 2: Data Scraping

Extract specific information and get it back in a structured format. For instance, to get a list of your unread emails:

python ./src/mobile_use/main.py \

"Open Gmail, find all unread emails, and list their sender and subject line" \

--output-description "A JSON list of objects, each with 'sender' and 'subject' keys"
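Because the output format is described in natural language and produced by an LLM, it is worth validating before you rely on it. The sketch below is a hypothetical validator, assuming you already have the structured result as a JSON string (how mobile-use hands it back is not covered here); it checks for a list of objects that each carry the sender and subject keys named in the example's --output-description.

```python
import json
from typing import Any, Dict, List, Sequence


def validate_records(raw: str,
                     required_keys: Sequence[str] = ("sender", "subject")) -> List[Dict[str, Any]]:
    """Parse a JSON list of objects and check that every object has the required keys."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed JSON
    if not isinstance(data, list):
        raise ValueError("expected a JSON list")
    for index, item in enumerate(data):
        if not isinstance(item, dict):
            raise ValueError(f"item {index} is not a JSON object")
        missing = [key for key in required_keys if key not in item]
        if missing:
            raise ValueError(f"item {index} is missing keys: {missing}")
    return data
```

Raising on the first mismatch keeps the check simple; if you prefer to keep partial results, collect the bad indices instead.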

[!NOTE]

If you haven't configured a specific model, mobile-use will prompt you to choose one from the available options.

🔎 Agentic System Overview

This diagram, which is updated automatically from the codebase, shows our current agentic system architecture.

❤️ Contributing

We love contributions! Whether you're fixing a bug, adding a feature, or improving documentation, your help is welcome. Please read our Contributing Guidelines to get started.


🏆 Attribution & Licensing

mobile-use is the first agentic framework to achieve 100% on the AndroidWorld benchmark.

This project is licensed under the Apache License 2.0.

If you use this code, or are inspired by the architecture used to reach our benchmark results, we kindly request that you credit Minitap, Inc.

How to Cite

If you use this work in research or a commercial product, please use the following:

Pierre-Louis Favreau, Jean-Pierre Lo, Clement Guiguet, Charles Simon-Meunier,

Nicolas Dehandschoewercker, Allen G. Roush, Judah Goldfeder, Ravid Shwartz-Ziv.

Do Multi-Agents Dream of Electric Screens? Achieving Perfect Accuracy on AndroidWorld Through Task Decomposition.

arXiv preprint arXiv:2602.07787 (2026).

https://arxiv.org/abs/2602.07787

BibTeX

@misc{favreau2026multiagentsdreamelectricscreens,
  title = {Do Multi-Agents Dream of Electric Screens? Achieving Perfect Accuracy on AndroidWorld Through Task Decomposition},
  author = {Pierre-Louis Favreau and Jean-Pierre Lo and Clement Guiguet and Charles Simon-Meunier and Nicolas Dehandschoewercker and Allen G. Roush and Judah Goldfeder and Ravid Shwartz-Ziv},
  year = {2026},
  eprint = {2602.07787},
  archivePrefix = {arXiv},
  primaryClass = {cs.AI},
  url = {https://arxiv.org/abs/2602.07787}
}